Case Study 01

Engineering the Digital Core

Deconstructing a monolithic legacy platform and rebuilding it as a cloud-native system engineered for velocity, resilience, and scale.

The Context

A Platform Built on Technical Debt

A mid-to-large software company's mission-critical platform had grown over a decade into a tightly coupled monolithic system. What once powered rapid early growth was now the single biggest constraint on innovation.

Release cycles had slowed to a crawl, infrastructure costs were climbing quarter over quarter, and reliability issues were intensifying under peak load. Engineering teams spent more time on maintenance than on evolving the product.

The Friction Point

Velocity Bottlenecks at Every Layer

Deployment risk and brittle integrations paralyzed the team. Fear of change made every release a high-stakes event.

Tightly coupled architecture preventing independent scaling of core services.

Manual testing cycles consuming days of engineering time before every release.

Scaling limitations causing performance degradation during peak usage periods.

Our Approach

Cloud-Native Transformation

Incremental Strangler Pattern

Rather than attempting a high-risk full rewrite, we incrementally peeled individual capabilities off into independent microservices. Each migration was validated in production before the next one began, so existing users experienced no disruption.
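A strangler-style migration usually sits behind a routing facade. The facade sends paths that have already been migrated to their new services and sends everything else to the monolith. The Python sketch below assumes simple path-prefix routing. `StranglerRouter`, `LEGACY_UPSTREAM`, and the service URLs are hypothetical placeholders, not this client's actual setup.

```python
from dataclasses import dataclass, field
from typing import Dict

# Hypothetical address of the legacy monolith; anything not yet migrated goes here.
LEGACY_UPSTREAM = "http://legacy-monolith.internal"


@dataclass
class StranglerRouter:
    # Migrated path prefix -> upstream service that now owns it (illustrative).
    migrated: Dict[str, str] = field(default_factory=dict)

    def migrate(self, prefix: str, upstream: str) -> None:
        """Cut one capability over to its new microservice."""
        self.migrated[prefix] = upstream

    def rollback(self, prefix: str) -> None:
        """Return a capability to the monolith, e.g. if production validation fails."""
        self.migrated.pop(prefix, None)

    def route(self, path: str) -> str:
        # Match whole path segments so "/billing" does not capture "/billingx".
        matches = [p for p in self.migrated if path == p or path.startswith(p + "/")]
        if not matches:
            return LEGACY_UPSTREAM
        # Longest prefix wins, so a sub-path can be carved out on its own.
        return self.migrated[max(matches, key=len)]


router = StranglerRouter()
router.migrate("/billing", "http://billing-svc.internal")
print(router.route("/billing/invoices/42"))  # http://billing-svc.internal
print(router.route("/reports/q3"))           # http://legacy-monolith.internal
```

Rolling back a capability takes a single map removal. That is one way to keep each step reversible while it is validated in production.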

Containerization & Kubernetes

We standardized all environments on Docker containers and used Kubernetes orchestration for horizontal scalability and fault isolation. This gave engineering teams dev-to-prod parity and eliminated failures caused by environment drift.
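Horizontal scaling in Kubernetes is commonly driven by the Horizontal Pod Autoscaler. Its documented core rule is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The sketch below reproduces that arithmetic with a 10% tolerance band and min/max clamping. It is a simplified illustration: it omits the real controller's handling of missing metrics, pod readiness, and stabilization windows. The function and parameter names are our own.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Simplified HPA arithmetic (no readiness or stabilization logic)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to target: keep the current count to avoid flapping.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured replica bounds.
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, current_metric=90, target_metric=60))  # 6 (scale out)
print(desired_replicas(4, current_metric=20, target_metric=60))  # 2 (scale in)
```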

Automated CI/CD Pipelines

We built security scanning, automated testing, and progressive rollouts directly into the deployment pipeline. This removed the manual release gates and made safe daily deployments routine across all services.
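Progressive rollouts typically shift traffic to a new version in steps and roll back when health signals degrade. This sketch assumes hash-based user bucketing and an error-rate gate. The step percentages, the 1% error threshold, and all function names are illustrative assumptions rather than the pipeline described here.

```python
import hashlib

# Illustrative traffic steps (percent of users on the new version).
ROLLOUT_STEPS = [1, 5, 25, 50, 100]


def bucket(user_id: str) -> int:
    """Deterministically map a user to a bucket in [0, 100)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def serves_new_version(user_id: str, percent: int) -> bool:
    # Stable buckets mean a user on the new version stays there as percent grows.
    return bucket(user_id) < percent


def next_step(current: int, error_rate: float, max_error_rate: float = 0.01) -> int:
    """Advance one step if healthy; roll back to 0% if the error budget is exceeded."""
    if error_rate > max_error_rate:
        return 0
    for step in ROLLOUT_STEPS:
        if step > current:
            return step
    return current  # already at 100%


print(next_step(5, error_rate=0.002))  # 25
print(next_step(25, error_rate=0.05))  # 0 (rolled back)
```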

Measurable Results

Impact on Delivery

From monthly to multiple daily releases

Resilient, self-healing infrastructure

Right-sized cloud resource allocation

Fully automated release pipeline

Case Study 02

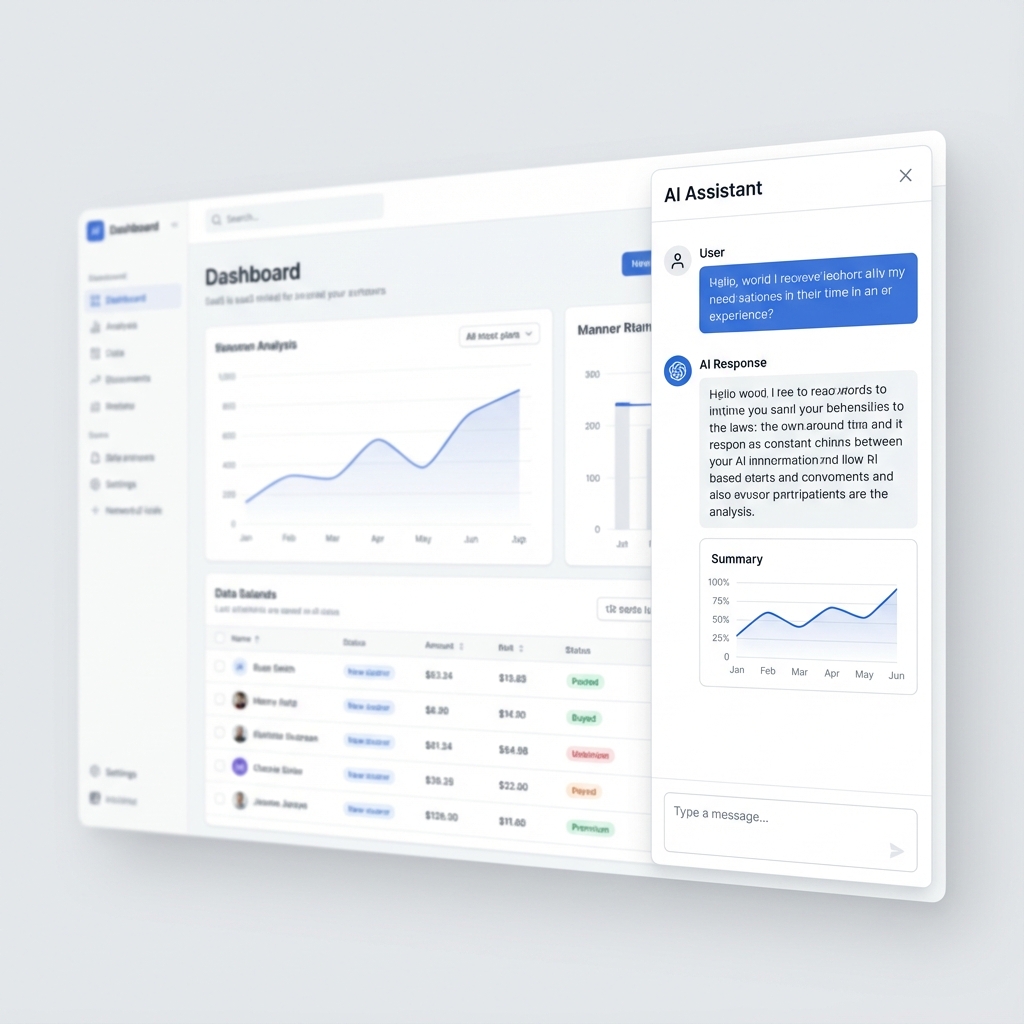

AI Product Integration

Embedding intelligent assistants directly into the user workflow to automate repetitive tasks and drive product differentiation.

The Context

Users Drowning in Manual Work

A project management tool's users were spending significant time on repetitive tasks: documentation, analysis, and workflow coordination. Product differentiation was plateauing, and user feedback pointed to productivity friction rather than missing features.

The Friction

Manual effort consuming high-value expert time on repetitive tasks.

Inconsistent outputs across different user groups and workflows.

Difficulty scaling human-intensive workflows as the user base grew.

User fatigue with complex, data-heavy interfaces reducing engagement.

The Solution

Embedded AI Assistant

We integrated a secure, fine-tuned LLM that acts as a co-pilot inside the application. It draws on the user's working context while operating within strict guardrails.

Smart Summaries

Auto-generating concise summaries from complex project threads, turning noise into actionable insight.

Assisted Content

Drafting documents, updates, and reports with human-in-the-loop control for quality and accuracy.
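One way to enforce human-in-the-loop control is to make approval a hard precondition for publishing AI-drafted content. The sketch below models that rule as a small state machine. `AssistedDraft` and its states are hypothetical names, and a production system would also persist review history.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    PUBLISHED = "published"


@dataclass
class AssistedDraft:
    text: str  # initial text, e.g. produced by the assistant
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None

    def edit(self, new_text: str) -> None:
        if self.status is Status.PUBLISHED:
            raise ValueError("published content cannot be edited in place")
        # Any change invalidates a prior approval, so the new text is reviewed again.
        self.text = new_text
        self.status = Status.DRAFT
        self.reviewer = None

    def approve(self, reviewer: str) -> None:
        if self.status is not Status.DRAFT:
            raise ValueError(f"cannot approve a {self.status.value} draft")
        self.status = Status.APPROVED
        self.reviewer = reviewer

    def publish(self) -> None:
        # The human sign-off is a hard gate, not an optional step.
        if self.status is not Status.APPROVED:
            raise PermissionError("a human reviewer must approve before publishing")
        self.status = Status.PUBLISHED
```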

Semantic Search

Enabling natural-language search across proprietary product data, replacing rigid keyword filters.
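Semantic search generally works by embedding documents and queries as vectors and ranking results by similarity rather than keyword overlap. The sketch below shows only the ranking step, using cosine similarity. The three-dimensional vectors stand in for the output of an embedding model, which is assumed rather than shown, and the document ids are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: Sequence[float], index: Dict[str, Sequence[float]], k: int = 3) -> List[str]:
    """Return the ids of the k documents most similar to the query embedding."""
    ranked = sorted(index, key=lambda doc_id: cosine(query, index[doc_id]), reverse=True)
    return ranked[:k]


# Toy 3-d vectors standing in for real embedding-model output.
index = {
    "launch-plan": [0.9, 0.1, 0.0],
    "budget-review": [0.1, 0.9, 0.1],
    "retro-notes": [0.7, 0.2, 0.3],
}
print(search([1.0, 0.0, 0.1], index, k=2))  # ['launch-plan', 'retro-notes']
```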

Measurable Product Impact

Full Spectrum

Technology Capabilities

Questions

Technology & Software FAQs

We work across the modern technology stack, including AWS, Azure, GCP, Kubernetes, Terraform, and serverless architectures. Our teams have deep expertise in the React, Node.js, Python, Go, and Java ecosystems, enabling us to modernize legacy platforms and build greenfield cloud-native applications at scale.

We support multi-cloud and hybrid deployments. We design cloud-agnostic architectures that minimize vendor lock-in and enable workload portability. Our infrastructure-as-code approach, using Terraform and Pulumi, allows consistent deployments across AWS, Azure, and GCP, with hybrid connectivity for organizations transitioning from on-premises environments.

We leverage PyTorch, TensorFlow, Hugging Face Transformers, LangChain, and OpenAI APIs for model development and integration. For MLOps, we implement MLflow, Kubeflow, and SageMaker pipelines to ensure reproducibility, monitoring, and continuous retraining of production models.

We follow an API-first integration strategy using REST, GraphQL, and event-driven architectures with Kafka or RabbitMQ. For legacy systems, we implement the strangler fig pattern to incrementally decouple and modernize without disrupting existing business operations or data flows.
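An in-process publish/subscribe bus can illustrate the decoupling that an event-driven architecture provides. This is a stand-in for Kafka or RabbitMQ, not their client APIs. `EventBus` and the `order.created` topic are hypothetical, and a real broker adds durable storage, delivery guarantees, and independent consumer scaling.

```python
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]
Handler = Callable[[Event], None]


class EventBus:
    """In-memory stand-in for a broker's topic model (no persistence or retries)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = {}

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, event: Event) -> None:
        # The publisher never references consumers directly; that is the decoupling.
        for handler in list(self._subscribers.get(topic, [])):
            handler(event)


bus = EventBus()
invoices: List[Event] = []
# A newly extracted invoicing service reacts to events the legacy system emits.
bus.subscribe("order.created", invoices.append)
bus.publish("order.created", {"order_id": 7, "total": 120.0})
print(invoices)  # [{'order_id': 7, 'total': 120.0}]
```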

API-first means designing and documenting APIs before writing implementation code. We use OpenAPI specifications, contract testing, and automated documentation generation to ensure consistency. This approach lets frontend and backend teams work in parallel, improves developer experience, and enables seamless third-party integrations.
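Contract testing checks that a provider's responses still match the agreed specification. This minimal sketch checks a payload against required fields and types, copied by hand from a hypothetical OpenAPI schema fragment. In practice, a schema validator or a dedicated contract-testing tool would read these rules directly from the spec. The endpoint, contract, and function names are illustrative.

```python
from typing import Any, Dict, List

# Hypothetical contract for GET /projects/{id}, hand-copied from an OpenAPI schema fragment.
PROJECT_CONTRACT: Dict[str, Any] = {
    "required": ["id", "name", "status"],
    "properties": {"id": "integer", "name": "string", "status": "string", "owner": "string"},
}

# OpenAPI/JSON Schema type names mapped to Python types.
TYPE_MAP = {"integer": int, "string": str, "boolean": bool, "number": (int, float)}


def contract_violations(payload: Dict[str, Any], contract: Dict[str, Any]) -> List[str]:
    problems = []
    for name in contract["required"]:
        if name not in payload:
            problems.append(f"missing required field: {name}")
    for name, value in payload.items():
        expected = contract["properties"].get(name)
        if expected is None:
            continue  # unknown fields are tolerated so providers can add fields safely
        # bool subclasses int in Python, so reject True/False where a number is expected.
        bool_mismatch = isinstance(value, bool) and expected != "boolean"
        if bool_mismatch or not isinstance(value, TYPE_MAP[expected]):
            problems.append(f"{name}: expected {expected}, got {type(value).__name__}")
    return problems


print(contract_violations({"id": "1", "name": "Apollo"}, PROJECT_CONTRACT))
# ['missing required field: status', 'id: expected integer, got str']
```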

Let's Modernize Your Software Platform

Every technology company faces unique modernization challenges. We bring the engineering depth, and a track record of case studies like these, to solve them.